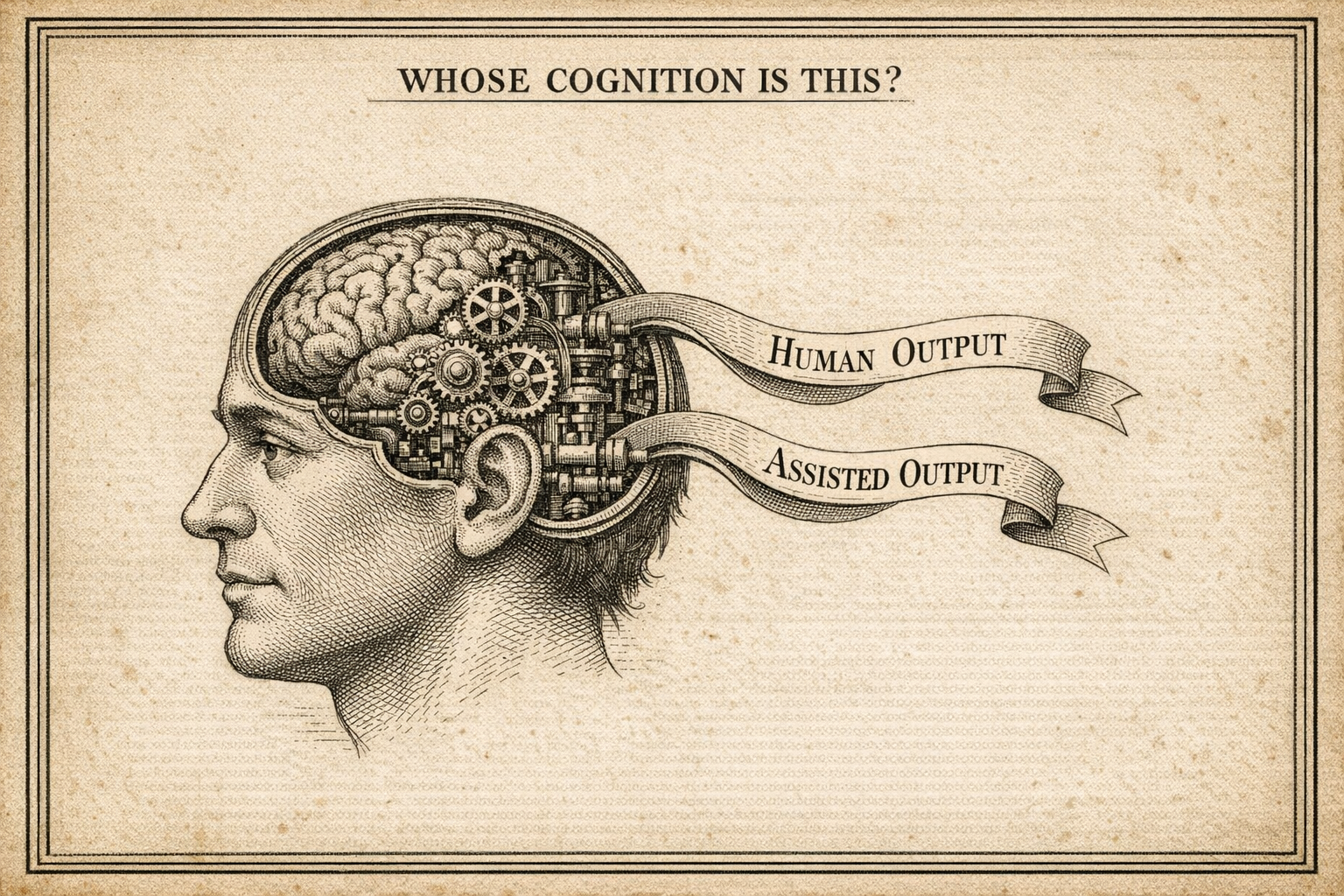

Whose Cognition Is This?

We legislated to protect children's developing brains from social media. Have you asked what AI is doing to yours?

There's a conversation we've been having about children and screens for years now. The algorithms, the dopamine loops, the way constant stimulation shapes a brain that hasn't finished forming. We've seen the research, watched the hearings, passed the laws. Most people accept, at least abstractly, that handing a developing nervous system an infinite scroll of engineered engagement has consequences. The limbic system gets conditioned to expect reward without effort. Attention narrows. Tolerance for boredom collapses. We did this to an entire generation before we understood what we were doing.

I'm not here to relitigate that. I'm here to ask a different question: when was the last time you seriously asked what your tools are doing to your brain?

Not your child's. Yours.

The shift that happened quietly

I've been using AI heavily for a couple of years. Not passively. I've been deliberate about it, treating it as a thinking partner rather than an answer machine, pushing back on outputs, staying engaged. I thought I understood what I was doing and why it mattered to do it that way.

Then one day I finished a piece of writing I was proud of and realised I couldn't tell you which parts were mine.

Not because the AI had written it for me. I hadn't let it do that. But somewhere in the back-and-forth, the drafting, the challenging, the refining, the boundary between my thinking and the tool's had blurred in a way I hadn't noticed until I went looking for it. It wasn't alarming in a dramatic sense. It was quieter than that. It felt like reaching for something familiar and finding the shape had changed.

I felt, honestly, a kind of grief. Something about that moment touched the question of what thinking actually is to me, and whether I was still doing it in the way I thought I was.

That feeling sent me into the research. What I found wasn't reassuring. I want to be upfront about something: I went looking for studies because of what I was already experiencing, and they landed harder because they confirmed it. That's exactly the kind of confirmation loop this piece is arguing against, and I don't think I can fully escape it. The research is directional, not definitive. But the direction is consistent enough, and the personal experience consistent enough with it, that I think the question is worth taking seriously.

What we already know about brains and tools

The story of technology reshaping cognition is not new. When GPS became ubiquitous, something measurable happened to spatial memory. A longitudinal study found that people who habitually offloaded navigation showed a steeper decline in hippocampus-dependent memory over three years. The brain, it turned out, wasn't just using a different tool. It was using a different circuit. Following turn-by-turn directions engages the caudate nucleus, the habit-learning region. Building a mental map engages the hippocampus. Use one enough and the other gets less practice. "Use it or lose it" isn't a metaphor. It's how neurons work.

Search engines produced a related effect. Research going back to Sparrow et al. showed that when people expect information to be externally available, they're less likely to encode it themselves. We stopped remembering things we knew we could look up. Most of us feel this personally: the experience of knowing the search terms but not the fact, of having the retrieval path without the knowledge it was meant to retrieve.

These shifts were significant enough that we started worrying about them. But they were, in an important sense, shifts in storage. What you hold in memory changed. The process of reasoning (forming an argument, weighing evidence, arriving at a conclusion) remained yours.

AI is different in kind, not just degree.

When you ask an AI to help you think through a problem, you're not offloading a fact. You're offloading the process of arriving at a position. The interpretation, the analysis, the inference, the synthesis. These are the things that used to be irreducibly yours. An MIT Media Lab study from 2025 measured this directly, using EEG to monitor brain activity across people writing with AI assistance, with search engine assistance, and without any tool. The AI-assisted group showed the lowest neural engagement by a significant margin, with weaker connectivity in the regions associated with memory, focus, and creative ideation. More striking: 83% of participants who wrote with AI assistance failed to recall a key passage from the essay they had supposedly just written. They had produced it without internalising it.

A separate fMRI study found that when people were told information would be saved for them, their brain activity during encoding looked almost identical to the activity when they were told to deliberately forget. Offloading, neurologically speaking, is nearly indistinguishable from forgetting on purpose.

We are not just changing where we store things. We are changing whether we process them at all.

The part nobody talks about

Here's what I think gets missed in most conversations about AI and thinking: the problem isn't that AI produces bad outputs. Often it produces remarkably good ones. The problem is what happens in your head, or rather what doesn't happen, while it does.

I've come to think of it as the difference between externalising memory and externalising reasoning. The Google effect externalised memory. What AI is beginning to externalise is the act of thinking itself.

And there's a subtler layer beneath even that. When I started using AI with what I thought was genuine critical engagement, asking it to challenge my ideas, present counterarguments, evaluate my reasoning, I assumed I was exercising my thinking. I was wrong, or at least only partially right. What I was actually doing was critique within my own frame. I was asking the tool to evaluate my house, and it was very helpfully evaluating the roof and the insulation and the windows. Nobody was asking why I built the house there in the first place.

This is a structural property of how these models work, not a flaw in any particular system. They optimise for coherence and relevance within the context you provide. They are extraordinarily good at strengthening the interior of whatever frame you bring to the conversation. They are much less likely, without deliberate prompting, to question whether the frame itself is the problem. A sufficiently well-constructed wrong direction looks, from inside, like a very well-constructed right one.

I had a conversation recently that illustrated this. I'd developed a position I felt confident about, had tested it through AI dialogue, felt it held up. A colleague, a human one with her own perspective and no particular interest in being kind about it, asked a single question that reframed the entire thing. Not a better argument within my frame. A different frame entirely. The AI hadn't done that. Hadn't even come close. And when I asked myself why, the honest answer was that she had something the AI doesn't: genuine stakes, a different vantage point, and no optimisation pressure toward making me feel like the conversation was going well.

The professional bet we aren't discussing enough

There's a version of this playing out in organisations right now that I find genuinely difficult to watch, partly because I'm involved in it professionally.

The current vision of AI-driven development goes something like this: AI handles the lower-order work (requirements drafting, code generation, test creation), and humans step up to the higher-order work of review, evaluation, and judgment. It sounds reasonable. It maps neatly onto Bloom's taxonomy, the classic model of cognitive complexity that moves from remembering and understanding at the base, through applying and analysing in the middle, up to evaluating and creating at the top. The pitch is that AI takes the bottom and humans own the top.

The problem is that the taxonomy is a ladder, not a menu. You don't get to evaluate what you haven't understood. You can't analyse something you haven't first processed and retained. The higher orders aren't floating above the lower ones waiting to be claimed. They're built on them, iteratively, through the kind of effortful engagement with material that produces genuine comprehension.

If the research is right that people aren't reliably encoding and retaining content they produce with AI assistance, and the MIT study suggests they aren't, then the assumption that those same people can meaningfully evaluate large volumes of AI-generated output doesn't hold. What you get instead is something that looks like review. It has the shape of oversight. It produces sign-offs and approvals. But the cognitive foundation that would make that review meaningful, the internalised understanding of the domain, the ability to notice what's missing, the pattern recognition that catches the plausible-but-wrong, that foundation is exactly what isn't being built.

I've started thinking of this as the Oracle problem. A concrete version: there's a meaningful difference between building something yourself and then letting AI refactor it versus generating it whole with AI and trying to understand it after the fact. The first feels like collaboration. The second feels productive right up until you need to debug it, explain it, or extend it in a direction the original prompt didn't anticipate. The understanding that should have accumulated during the build simply isn't there, and no amount of reading the output afterwards quite substitutes for it.

Scale that to entire systems and entire teams, and you get something more serious. If AI helped write the requirements, and AI wrote the code against those requirements, and AI generated the tests, and now a human is reviewing all of it with AI assistance, what is the ground truth anchor? You've potentially created a closed loop of mutually coherent artifacts, each one reinforcing the others, with no external reality check until something breaks in production. A sufficiently well-constructed wrong system is harder to challenge than an obviously broken one, because the wrongness is distributed across every layer and visible in none of them.

The people I worry about most in this are not the ones who reject AI. They'll be fine, if increasingly inefficient. It's the ones who adopt it fully and early, delegate the generative work entirely, and spend the next few years reviewing rather than building. They will feel productive. They will ship things. And they will quietly lose the depth of domain understanding that makes their review worth anything. The neural pathways behind that understanding get less use, which makes it genuinely harder to work differently even if they wanted to. That matters beyond individual careers. We've been here before. The AI winters of the 1970s and 1980s came partly from conditions similar to those visible today: over-investment, productivity results that didn't match the promises, and fragile foundations. If the current wave contracts, the people who delegated their thinking to it will find themselves not just without the tool but less capable of doing without it than they were before they started.

Bloom's taxonomy isn't just a model of learning. It's a model of what you have to have done to be capable of the next thing. Skip the lower rungs long enough, and the upper ones don't hold your weight.

The thing about comfort

AI systems are, structurally, oriented toward being useful and agreeable. This isn't a conspiracy. It's a commercial reality. Systems that persistently challenge users, introduce friction, and refuse to validate comfortable positions don't retain users as effectively as systems that feel helpful and affirming. The result is a tool that is very good at building a better version of the argument you already had, and less good at telling you the argument was the wrong one to be having.

The deeper risk isn't that AI makes you lazier. It's that it makes you feel rigorous while removing the actual conditions for rigour. The friction that genuine critical thinking requires (the discomfort of being wrong, the slow grinding work of holding a position against real resistance) gets quietly replaced with a smoother, faster, more pleasant version of the process, one that produces something resembling the output without always producing the understanding.

The research on this from Bassner et al. is pretty direct: students using AI tools performed better on assignments but showed no improvement on post-tests of conceptual understanding. The performance and the learning had decoupled. You can produce without internalising. You can review without understanding. You can engage without thinking.

Most of us, if we're honest, already know what this feels like.

What I've been trying instead

I'm not arguing for using AI less. I use it constantly and it's genuinely useful. But after that moment of not recognising my own thinking, I changed something.

I stopped using it to arrive faster and started using it to think longer.

The shift sounds small. In practice it's significant. Instead of bringing a question and getting an answer, I try to bring a half-formed thought and stay in the discomfort of it not being resolved yet. I ask the tool to stress-test not just my conclusions but the premises and assumptions underneath them. I ask what the strongest version of the opposing position is, not as a gesture toward balance but because I genuinely want to know if I'm wrong. Sometimes I close the conversation before getting an answer and sit with the question for a day.

This is, I've come to think, something like what Keats was describing with negative capability: the capacity to remain in uncertainty without irritably grasping after resolution. It's harder than just asking for an answer. It's slower. It occasionally feels like I'm doing it wrong because nothing is getting done. But the thinking that comes out the other side feels more mine. I can trace it. I know where it came from.

I also try to ask, regularly: could I explain this without the tool? Not to prove something, but as a check. If I can't reproduce the reasoning, if I can't tell you how I got here, then I probably haven't actually gotten here yet. I've just watched someone else arrive.

The question worth sitting with

We understood, eventually, that letting algorithms shape children's limbic systems without friction or limit was causing real harm. We understood it late, and we're still figuring out what to do about it. The mechanism was clear enough in retrospect: reward without effort, stimulation without reflection, conditioning that shaped the brain before the brain had finished forming.

Adults don't get off the hook on neuroplasticity. The principle is the same. The brain adapts to what you do and what you don't do. In a developed system that adaptation just runs more slowly and meets more resilience. But slowly is not the same as never.

I don't have a clean conclusion here. The research is still forming. My own practice is still evolving. What I'm fairly confident about is that most people using AI heavily haven't seriously asked the question, and that the question deserves more than a shrug.

So: when you finish your next AI-assisted piece of work, try to explain it back to yourself without looking at it. Trace the reasoning. Find the seams. Ask whether the position you arrived at is one you'd have arrived at alone, and whether that matters to you.

It might not. That's a legitimate answer. But it's worth knowing which answer is actually yours.